Where Your Intelligence Lives Is a Strategic Choice

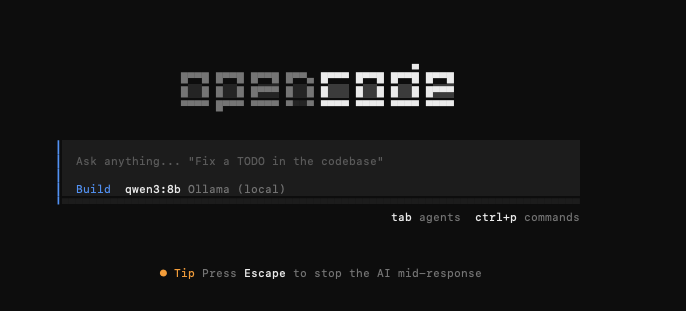

What you're actually choosing when you go local is bigger than cost reduction. You're making a structural decision about where your intelligence layer lives. Running LLMs locally means no third-party provider has visibility into your proprietary codebase, because your prompts and code never leave your own infrastructure. Model behavior stays stable because you control which version of the weights you run, so silent upstream model updates can't change it underneath you. Your engineering environment becomes owned end to end, with no third-party inference service inside your development loop.